Patterns of emotional dynamics.

What makes people trust each other? Which emotional patterns lead to purchase decisions? These are some of the questions driving the ongoing development of the Pitch Patterns emotion detection AI.

Scientific method

One of the key problems in determining which emotion is expressed during a conversation is the subjectivity of how people read emotions. We overcome this by using a simple Positive Activation – Negative Activation (PANA) model that focuses on two actionable metrics: energy (strong or weak) and how the emotional expression feels (positive or negative).
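To illustrate why two simple axes reduce subjectivity, here is a minimal sketch, assuming a model has already produced an energy score and a valence (positive/negative feel) score for a stretch of speech, each scaled to [-1, 1]. The `VoiceEstimate` type, the score ranges, the zero thresholds and the quadrant labels are hypothetical and are not taken from Pitch Patterns; the sketch only shows how the two axes combine into four categories.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VoiceEstimate:
    """Hypothetical per-utterance scores, each assumed to lie in [-1.0, 1.0]."""

    energy: float   # assumption: > 0 means strong activation, <= 0 means weak
    valence: float  # assumption: > 0 means a positive feel, <= 0 means negative

    def __post_init__(self) -> None:
        # Reject scores outside the assumed [-1, 1] range.
        for name, value in (("energy", self.energy), ("valence", self.valence)):
            if not -1.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in [-1, 1], got {value}")


def pana_quadrant(estimate: VoiceEstimate) -> str:
    """Map an (energy, valence) pair onto one of four quadrants."""
    strength = "strong" if estimate.energy > 0 else "weak"
    feel = "positive" if estimate.valence > 0 else "negative"
    return f"{strength} {feel}"


if __name__ == "__main__":
    samples = [
        VoiceEstimate(energy=0.7, valence=0.4),
        VoiceEstimate(energy=-0.2, valence=0.5),
        VoiceEstimate(energy=0.6, valence=-0.8),
        VoiceEstimate(energy=-0.5, valence=-0.1),
    ]
    for sample in samples:
        print(sample, "->", pana_quadrant(sample))
```

Because each axis asks only one question (strong or weak, positive or negative), two listeners are more likely to agree on the label than when choosing among many named emotions such as "frustrated" or "annoyed".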

Emotions

To help deliver a great customer experience, we have trained machine learning models to detect emotional patterns in a person's tone of voice.

We analyze the biometrics of the voice, not the words spoken.

Pitch Patterns emotion classification relies on models that use nonverbal cues, such as tone of voice and laughter, which speech-to-text systems miss.

Pitch Patterns uses an unbiased classification approach

Just as every person has a unique fingerprint, we treat each user's personality type as a weight that shapes that individual's emotional patterns.

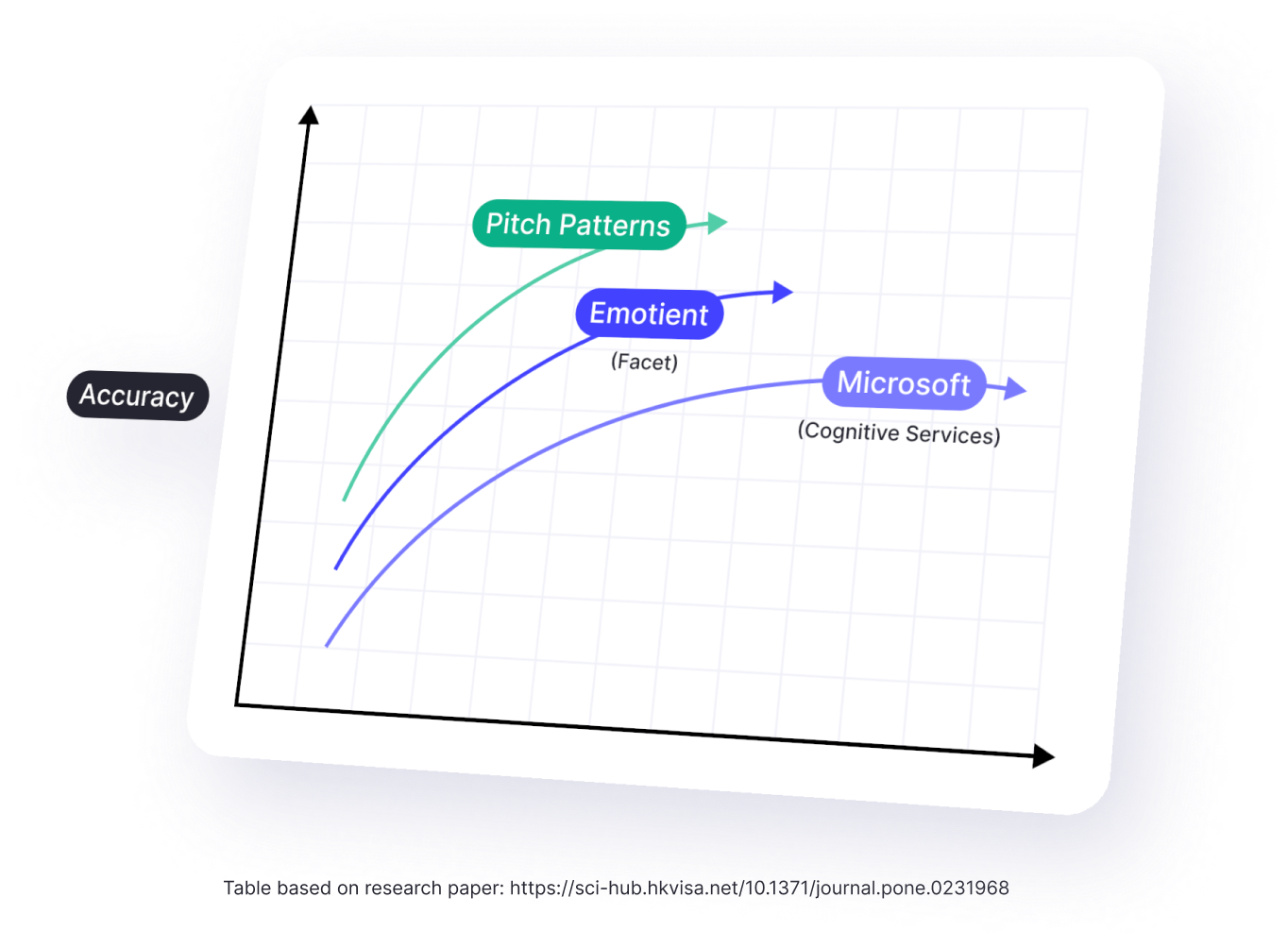

Accuracy

Pitch Patterns models deliver up to 65% emotion detection accuracy, an industry-leading result. This accuracy is achieved from voice alone, whereas other systems also use facial expressions, which makes emotion classification easier.

Actionable insights from day 1.
